I have a large folder (3000GB) on an enterprise server. I need to zip it up and split the archive into parts of 100GB each.

I looked into these articles:

askubuntu.com

askubuntu.com

But they don't support splitting into multiple parts.
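Here is a minimal Python sketch of the splitting half. It assumes a gzip-compressed tar is acceptable instead of a `.zip` file. `SplitWriter` and `tar_gz_in_parts` are hypothetical names used only for illustration. The only real API it relies on is the standard-library `tarfile` streaming mode `"w|gz"`. That mode writes the archive strictly sequentially, so the output can be cut into fixed-size parts as it is produced and never needs to exist as one 3000GB file. Compression here is single-threaded.

```python
import os
import tarfile


class SplitWriter:
    """Write-only file-like object that starts a new part file every
    `part_size` bytes. Parts are named <prefix>000, <prefix>001, ..."""

    def __init__(self, prefix: str, part_size: int) -> None:
        if part_size <= 0:
            raise ValueError("part_size must be positive")
        self.prefix = prefix
        self.part_size = part_size
        self.paths: list[str] = []
        self._current = None   # currently open part file
        self._written = 0      # bytes written to the current part

    def _next_part(self) -> None:
        if self._current is not None:
            self._current.close()
        path = f"{self.prefix}{len(self.paths):03d}"
        self.paths.append(path)
        self._current = open(path, "wb")
        self._written = 0

    def write(self, data: bytes) -> int:
        view = memoryview(data)
        while view:
            # Roll over lazily, so a part is only created when there is data for it.
            if self._current is None or self._written >= self.part_size:
                self._next_part()
            room = self.part_size - self._written
            chunk = view[:room]
            self._current.write(chunk)
            self._written += len(chunk)
            view = view[len(chunk):]
        return len(data)

    def close(self) -> None:
        if self._current is not None:
            self._current.close()
            self._current = None


def tar_gz_in_parts(src_dir: str, prefix: str, part_size: int) -> list[str]:
    """Stream src_dir into a .tar.gz that is split across fixed-size parts."""
    writer = SplitWriter(prefix, part_size)
    try:
        # "w|gz" is tarfile's streaming mode: it only calls write() on fileobj.
        with tarfile.open(fileobj=writer, mode="w|gz") as tar:
            tar.add(src_dir, arcname=os.path.basename(src_dir))
    finally:
        writer.close()
    return writer.paths
```

The parts are plain byte ranges of a single `.tar.gz` stream. Concatenating them in order (for example `cat bigfolder.tar.gz.part-* > bigfolder.tar.gz`) reassembles the archive. The three-digit suffix keeps shell glob order correct for up to 1000 parts.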

I also looked into these articles:

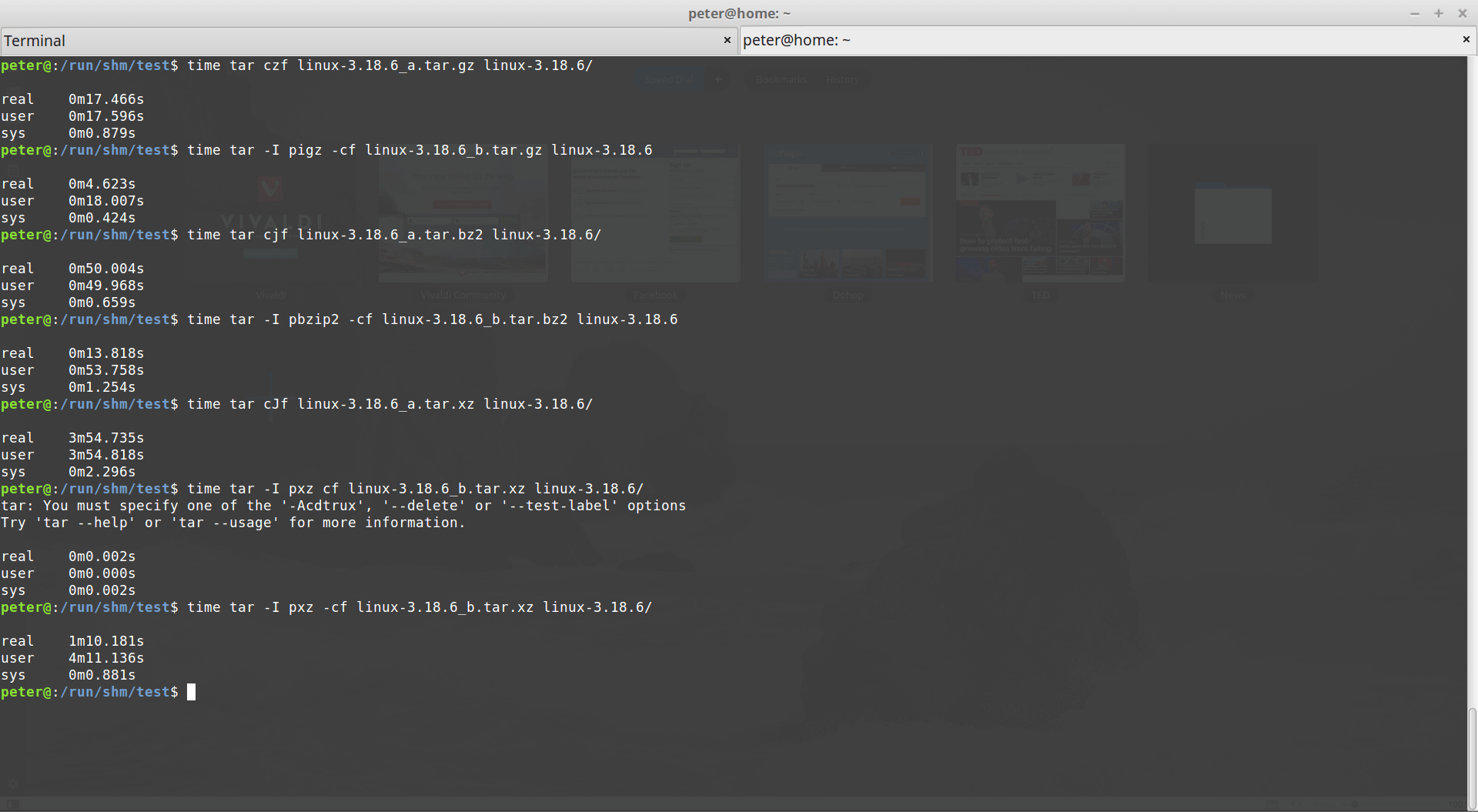

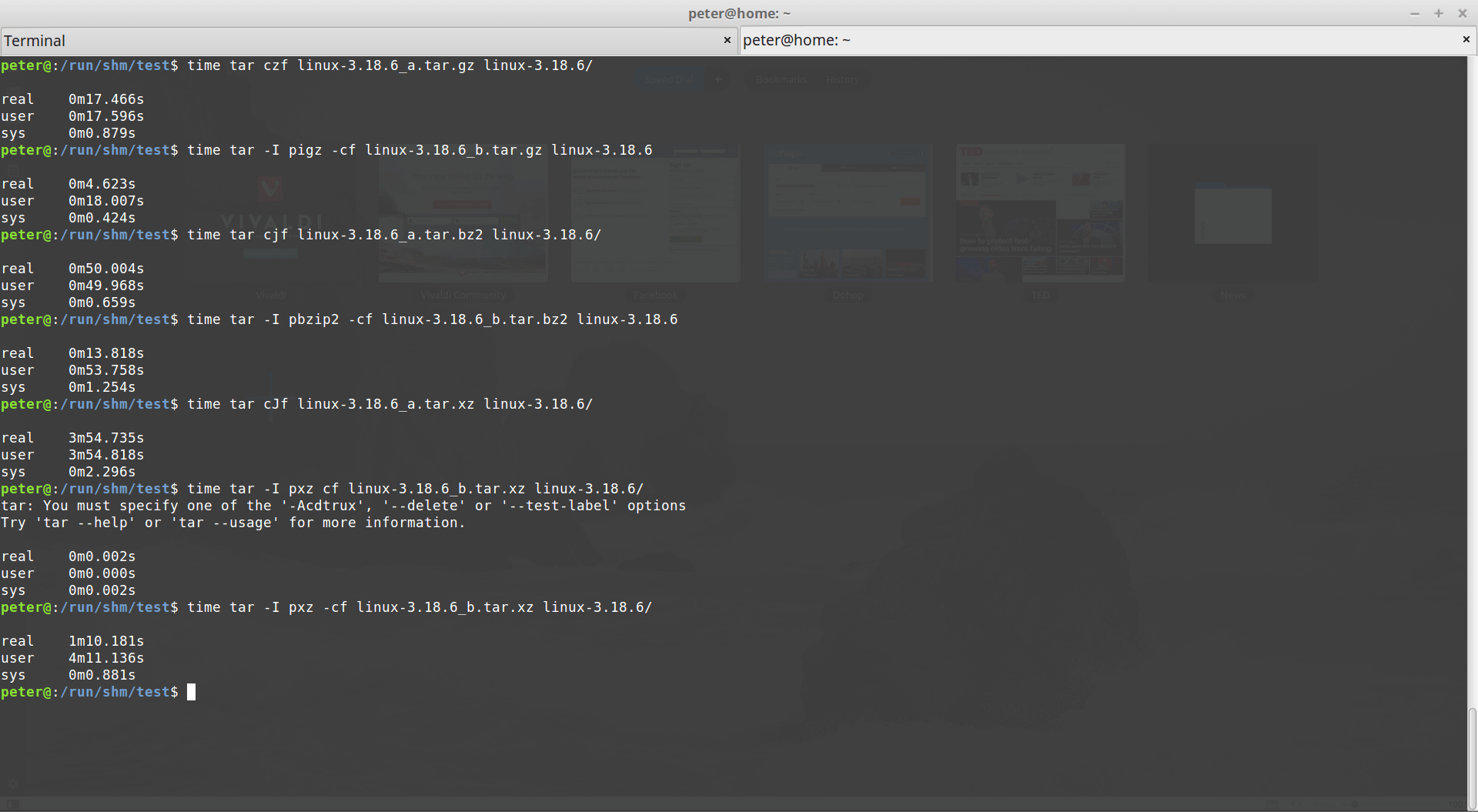

Use multiple CPU thread/core to make tar compression faster - Peter Dave Hello's Blog

On many unix like systems, tar is a wide …

www.peterdavehello.org

Multi-Core Compression tools

What compression tools are available in Ubuntu that can benefit from a multi-core CPU.

But they don't support splitting into multiple parts either.
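For the multi-core half, here is one hedged Python sketch. It reads the input in fixed-size chunks, gzips each chunk on a thread pool, and writes the results in their original order. This relies on RFC 1952 allowing a gzip file to be a series of concatenated members, which `gunzip` and Python's `gzip.decompress` both accept. It is not how pigz works internally. It also assumes that CPython's `zlib` releases the GIL while deflating, which is what lets the threads overlap. `parallel_gzip` and its parameters are hypothetical. No more than `2 * workers` chunks are queued at once, so memory stays bounded even on a 3000GB stream.

```python
import gzip
from collections import deque
from concurrent.futures import ThreadPoolExecutor
from typing import BinaryIO, Iterator


def read_chunks(src: BinaryIO, chunk_size: int) -> Iterator[bytes]:
    """Yield successive chunks of at most chunk_size bytes until EOF."""
    while True:
        chunk = src.read(chunk_size)
        if not chunk:
            return
        yield chunk


def parallel_gzip(src: BinaryIO, dst: BinaryIO, chunk_size: int = 16 << 20,
                  workers: int = 4, level: int = 6) -> None:
    """Compress src into dst as a sequence of independent gzip members."""
    pending = deque()  # futures, kept in submission order
    with ThreadPoolExecutor(max_workers=workers) as pool:
        for chunk in read_chunks(src, chunk_size):
            # Each chunk becomes one complete gzip member.
            pending.append(pool.submit(gzip.compress, chunk, level))
            # Bound memory: once enough work is queued, write out the oldest member.
            if len(pending) >= 2 * workers:
                dst.write(pending.popleft().result())
        # Drain the remaining members in order.
        while pending:
            dst.write(pending.popleft().result())
```

If wired to stdin and stdout (`parallel_gzip(sys.stdin.buffer, sys.stdout.buffer)` in a hypothetical script `pgz.py`), it could sit in a pipeline such as `tar -cf - folder | python3 pgz.py | split -b 100G - folder.tar.gz.part-`. If pigz is installed, `pigz` could take its place in the same pipeline.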